AutoRhythm

Syllable-level rhythm correction for rap vocals. Every syllable on beat. Your voice, untouched.


Audio Pipeline

1. Backing Beat

The instrumental track everything syncs to

2. Human Vocal (Raw)

Original rap vocal: off-beat timing, unprocessed

3. AI Guide (Full Mix)

ACE-Step 1.5 generates a rap vocal from the lyrics over a backing track

4. AI Guide (Vocals Stripped)

Demucs isolates just the vocal; this becomes the timing reference

5. Corrected Human Vocal

Every syllable time-warped to match the AI guide's rhythm, with zero-sample anchor error. Skip to ~14s for the vocals.

6. Raw Vocal + Beat (Comparison)

Original uncorrected vocal over the backing track: hear the timing drift

7. Final Mix

Corrected human vocal layered over the backing track

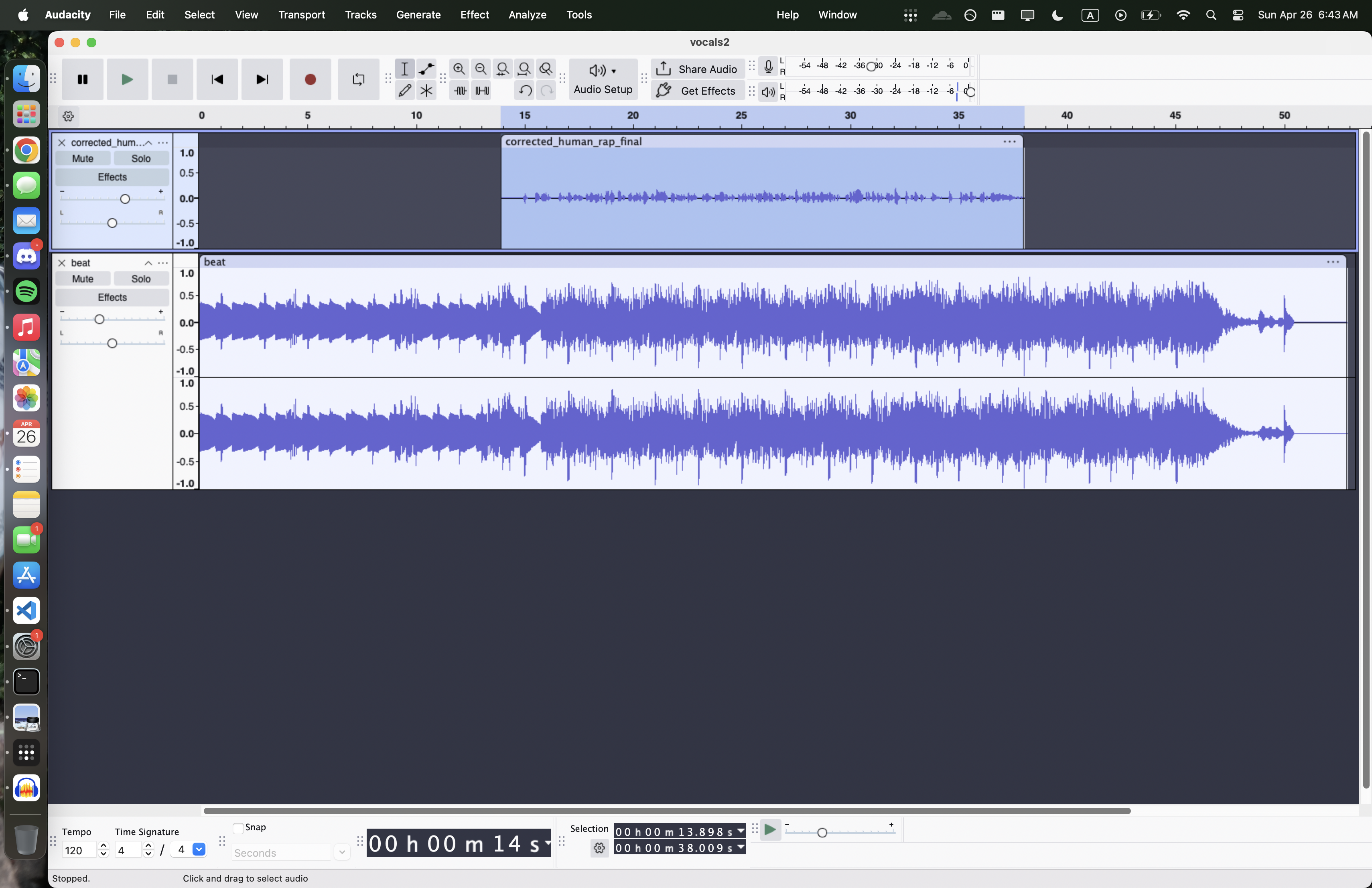

Audacity Session

The pipeline outputs a full Audacity session with visible clips, labels, and waveform tracks.

Syllable Alignment Viewer

110 syllables aligned between human vocal and AI guide. Each block is one syllable region.

Anchor Map

Every syllable's human timing vs. guide timing. Delta shows how far each syllable was shifted.

| # | Syllable | Human Onset | Guide Onset | Delta | Confidence |
|---|---|---|---|---|---|

How It Works

A 9-phase pipeline: AI models handle guide generation and alignment, and everything after that is fully deterministic.

Resample to 48 kHz and downmix to mono for analysis
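A minimal standard-library sketch of this phase: each multichannel frame is averaged into a mono sample, then the signal is resampled by linear interpolation. Linear interpolation applies no anti-aliasing filter, so it only illustrates the index arithmetic; a real pipeline would more likely use a band-limited resampler such as `scipy.signal.resample_poly`, whose integer `up`/`down` factors `polyphase_factors` computes. All function names here are hypothetical.

```python
from math import gcd


def downmix_to_mono(frames):
    """Average each multichannel frame (a tuple of channel samples) into one sample."""
    return [sum(frame) / len(frame) for frame in frames]


def polyphase_factors(sr_in, sr_out=48_000):
    """Smallest integer (up, down) factors, e.g. for scipy.signal.resample_poly."""
    g = gcd(sr_in, sr_out)
    return sr_out // g, sr_in // g


def resample_linear(samples, sr_in, sr_out=48_000):
    """Linear-interpolation resampler; no anti-aliasing filter is applied."""
    if sr_in == sr_out:
        return list(samples)
    n_out = round(len(samples) * sr_out / sr_in)
    out = []
    for i in range(n_out):
        pos = i * sr_in / sr_out  # fractional position in the input signal
        j = int(pos)
        frac = pos - j
        nxt = samples[j + 1] if j + 1 < len(samples) else samples[j]
        out.append(samples[j] * (1 - frac) + nxt * frac)
    return out
```

For a 44.1 kHz source, `polyphase_factors(44_100)` gives `(160, 147)`, the ratio a polyphase resampler would use to reach 48 kHz.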

ACE-Step generates a guide vocal from the lyrics; Demucs isolates it
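ACE-Step's own API is not shown on this page, so only the separation step is sketched. Assuming the Demucs command-line tool (v4, default `htdemucs` model), `--two-stems vocals` writes `vocals.wav` and `no_vocals.wav` under `<out>/<model>/<track>/`. The helper name and file names are hypothetical, and the output layout is worth confirming against the installed Demucs version.

```python
from pathlib import Path


def demucs_vocal_command(mix_path, out_dir="separated", model="htdemucs"):
    """Build a Demucs CLI call that splits a mix into vocals / no_vocals stems."""
    mix = Path(mix_path)
    cmd = ["demucs", "--two-stems", "vocals", "-n", model, "-o", str(out_dir), str(mix)]
    # Demucs names the per-track folder after the input file's stem.
    vocals = Path(out_dir) / model / mix.stem / "vocals.wav"
    return cmd, vocals
```

Running the command with `subprocess.run(cmd, check=True)` and then reading the returned path would yield the isolated guide vocal.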

CMUdict + G2P maps lyrics to canonical syllables
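In CMUdict every vowel phone carries a stress digit (0, 1 or 2), so a word's syllable count equals its number of digit-suffixed phones. The sketch below uses a hypothetical two-word excerpt instead of the full dictionary and a deliberately naive rule for attaching consonants to vowels; it is not necessarily how AutoRhythm syllabifies, and the G2P fallback for words missing from CMUdict is not modeled.

```python
# Hypothetical excerpt in CMUdict's ARPAbet format (the real dictionary is far larger).
CMU_EXCERPT = {
    "RHYTHM": "R IH1 DH AH0 M".split(),
    "BEAT": "B IY1 T".split(),
}


def is_vowel(phone):
    # In CMUdict only vowel phones end in a stress digit.
    return phone[-1].isdigit()


def syllabify(phones):
    """Naive split: consonants join the next vowel; trailing ones join the last syllable."""
    syllables, pending = [], []
    for phone in phones:
        pending.append(phone)
        if is_vowel(phone):
            syllables.append(pending)
            pending = []
    if pending:
        if syllables:
            syllables[-1].extend(pending)
        else:
            syllables.append(pending)  # word with no vowel phone at all
    return syllables


def lyric_syllables(words, lexicon=CMU_EXCERPT):
    """Flatten the lyrics into one canonical syllable sequence."""
    return [syl for word in words for syl in syllabify(lexicon[word.upper()])]
```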

Montreal Forced Aligner extracts phone-level timestamps
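Montreal Forced Aligner writes its alignments as TextGrid files with word and phone tiers. Assuming those phone intervals have already been parsed into `(start, end, label)` tuples whose labels match the dictionary's phones, a syllable's onset is simply the start time of its first phone. The silence label set and the function name are assumptions for illustration.

```python
# Labels assumed to mark silence or noise rather than lyric phones.
SILENCE = {"", "sil", "sp", "spn"}


def syllable_onsets(phone_intervals, syllables):
    """Return the start time of the first phone of each syllable."""
    phones = [iv for iv in phone_intervals if iv[2] not in SILENCE]
    expected = sum(len(syl) for syl in syllables)
    if len(phones) != expected:
        raise ValueError(f"aligner returned {len(phones)} phones, expected {expected}")
    onsets, i = [], 0
    for syl in syllables:
        onsets.append(phones[i][0])  # onset = start of the syllable's first phone
        i += len(syl)
    return onsets
```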

Map each human syllable to the guide's timing
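Because the human vocal and the guide are aligned against the same lyrics, both produce the same canonical syllable sequence, so one plausible mapping pairs syllables by position. This sketch assumes onsets are sample indices (the Anchor Map does not state its units) and uses delta = guide onset minus human onset; the names are hypothetical and the Confidence column is omitted.

```python
from dataclasses import dataclass


@dataclass
class Anchor:
    index: int
    syllable: str
    human_onset: int  # sample index in the human vocal
    guide_onset: int  # sample index in the AI guide

    @property
    def delta(self) -> int:
        # Positive: the syllable must move later to land on the guide.
        return self.guide_onset - self.human_onset


def build_anchor_map(labels, human_onsets, guide_onsets):
    """Pair syllables by position; both sequences come from the same lyrics."""
    if not (len(labels) == len(human_onsets) == len(guide_onsets)):
        raise ValueError("human and guide syllable counts differ")
    rows = zip(labels, human_onsets, guide_onsets)
    return [Anchor(i, s, h, g) for i, (s, h, g) in enumerate(rows, start=1)]
```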

Safe-boundary scoring finds clean split points
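The page does not say how safe boundaries are scored. One common heuristic, sketched here purely as an assumption, is to cut at the quietest short frame shortly before a syllable onset, with a small penalty for straying far from the onset. The window, frame size, hop, and weight are illustrative values, not AutoRhythm's.

```python
import math


def frame_rms(samples, start, length):
    """Root-mean-square energy of one analysis frame."""
    frame = samples[start:start + length]
    return math.sqrt(sum(x * x for x in frame) / len(frame)) if frame else 0.0


def best_split(samples, onset, search=480, frame=64, distance_weight=1e-4):
    """Pick the lowest-scoring frame start in [onset - search, onset]."""
    best, best_score = onset, math.inf
    for start in range(max(0, onset - search), onset + 1, frame // 2):
        # Quiet frames score low; distance from the onset adds a small penalty.
        score = frame_rms(samples, start, frame) + distance_weight * (onset - start)
        if score < best_score:
            best, best_score = start, score
    return best
```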

Build a piecewise time-warp specification for every segment
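A piecewise time-warp can be specified as one segment between each pair of consecutive anchors: the human span `[h_k, h_{k+1})` maps onto the guide span `[g_k, g_{k+1})`, and the stretch ratio is the ratio of their lengths. This is a minimal sketch with hypothetical names, assuming anchors are strictly increasing integer sample indices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WarpSegment:
    src_start: int  # human vocal, samples
    src_end: int
    dst_start: int  # output (guide) timeline, samples
    dst_end: int

    @property
    def ratio(self) -> float:
        # > 1 stretches the audio (slower), < 1 compresses it (faster).
        return (self.dst_end - self.dst_start) / (self.src_end - self.src_start)


def warp_spec(human_anchors, guide_anchors):
    """One segment between each pair of consecutive anchors."""
    if len(human_anchors) != len(guide_anchors) or len(human_anchors) < 2:
        raise ValueError("need matching anchor lists with at least two anchors")
    segments = []
    for k in range(len(human_anchors) - 1):
        h0, h1 = human_anchors[k], human_anchors[k + 1]
        g0, g1 = guide_anchors[k], guide_anchors[k + 1]
        if h1 <= h0 or g1 <= g0:
            raise ValueError("anchors must be strictly increasing")
        segments.append(WarpSegment(h0, h1, g0, g1))
    return segments
```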

Rubber Band applies the pitch-preserving time-stretch
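For a segment saved to its own file, the Rubber Band command-line tool stretches it by a time ratio (`-t`, where values above 1 lengthen the audio) and preserves pitch unless a pitch shift is requested. The helper below only builds the argument list; its name is hypothetical, and the optional `-3` flag (the R3 "finer" engine) assumes Rubber Band 3.x.

```python
def rubberband_command(src_wav, dst_wav, ratio, fine=False):
    """Build a Rubber Band CLI call; -t sets the output/input duration ratio."""
    if ratio <= 0:
        raise ValueError("time ratio must be positive")
    cmd = ["rubberband"]
    if fine:
        cmd.append("-3")  # R3 ("finer") engine, Rubber Band 3.x only
    cmd += ["-t", f"{ratio:.9f}", str(src_wav), str(dst_wav)]
    return cmd
```

The rendered length may differ from the requested ratio by a few samples, so code that needs exact sample counts should check the output length and trim or pad it.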

Build the Audacity session (clips, labels, and tracks) via mod-script-pipe
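mod-script-pipe exposes Audacity's scripting commands over a pair of named pipes. The sketch below follows the pipe names used in Audacity's `pipe_test.py` example and builds label commands as plain text. The command parameters shown (`AddLabel:`, `SetLabel: Label= Text= Start= End=`) should be checked against the Scripting Reference for the installed Audacity version, and the helper names are hypothetical.

```python
import os
import sys


def pipe_paths():
    """(to_audacity, from_audacity) pipe paths, as in Audacity's pipe_test.py."""
    if sys.platform == "win32":
        return r"\\.\pipe\ToSrvPipe", r"\\.\pipe\FromSrvPipe"
    uid = os.getuid()
    return f"/tmp/audacity_script_pipe.to.{uid}", f"/tmp/audacity_script_pipe.from.{uid}"


def label_commands(label_index, text, start_s, end_s):
    """Scripting commands that add one label, then set its text and time span."""
    text = text.replace('"', "'")  # keep the quoted parameter well-formed
    return [
        "AddLabel:",
        f'SetLabel: Label={label_index} Text="{text}" Start={start_s:.6f} End={end_s:.6f}',
    ]
```

Each command is written to the "to" pipe followed by a newline, and Audacity replies on the "from" pipe.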

Key Features

Zero-Sample Precision

Every rendered anchor lands on exactly the same integer sample index as its guide anchor: the anchor error is zero samples, by construction.
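Exactness of this kind is easiest to get by construction: keep anchors as integer sample indices, make each output segment exactly `g[k+1] - g[k]` samples long, and trim or zero-pad whatever the time-stretcher returns to that length. The running output length then equals each guide anchor regardless of how the stretcher rounds. This is a sketch of that idea under those assumptions, not necessarily AutoRhythm's implementation; the names are hypothetical.

```python
def fit_length(segment, target_len):
    """Trim or zero-pad a rendered segment to exactly target_len samples."""
    if target_len < 0:
        raise ValueError("negative target length")
    return segment[:target_len] + [0.0] * max(0, target_len - len(segment))


def assemble(rendered_segments, guide_anchors):
    """Concatenate segments so that segment k starts exactly at guide_anchors[k]."""
    out = [0.0] * guide_anchors[0]  # leading silence up to the first anchor
    for seg, g0, g1 in zip(rendered_segments, guide_anchors, guide_anchors[1:]):
        # Invariant: the output so far ends exactly on the current guide anchor.
        assert len(out) == g0
        out += fit_length(seg, g1 - g0)
    return out
```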

Voice Preservation

The human voice is only cut and time-stretched; it is never regenerated or transformed by AI.

Transparent Editing

Full Audacity session with visible clips, labels, and waveforms. Every edit is inspectable.

Two Modes

Guide mode uses an AI vocal as reference. Beat-only mode snaps to the detected beat grid.
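For beat-only mode, a minimal sketch assuming beat times in seconds are already available (for example from `librosa.beat.beat_track(y=y, sr=sr, units="time")`): subdivide each beat interval into a grid, then move every syllable onset to its nearest grid point. The subdivision count and helper names are assumptions.

```python
def beat_grid(beat_times, subdivisions=4):
    """Subdivide each inter-beat interval; 4 gives 16th notes if a beat is a quarter note."""
    grid = []
    for t0, t1 in zip(beat_times, beat_times[1:]):
        step = (t1 - t0) / subdivisions
        grid.extend(t0 + k * step for k in range(subdivisions))
    grid.append(beat_times[-1])  # include the final beat itself
    return grid


def snap_onsets(onsets, grid):
    """Move each syllable onset to the nearest grid point."""
    return [min(grid, key=lambda g: abs(g - t)) for t in onsets]
```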

Built With

- Python

- ACE-Step 1.5

- Demucs

- Montreal Forced Aligner

- CMUdict + g2p_en

- Rubber Band

- librosa

- Flask

- pywebview

- wavesurfer.js

- Audacity

- NumPy

- SciPy

- PyTorch